Why More Analysts Won’t Solve Your SOC’s Alert Problem

By Rich Perkins, Principal Sales Engineer, Prophet Security

Your security spend has roughly doubled in six years. Your time-to-investigate and respond hasn’t moved. Your CFO is asking why the security headcount keeps growing while the metrics that matter to the business don’t.

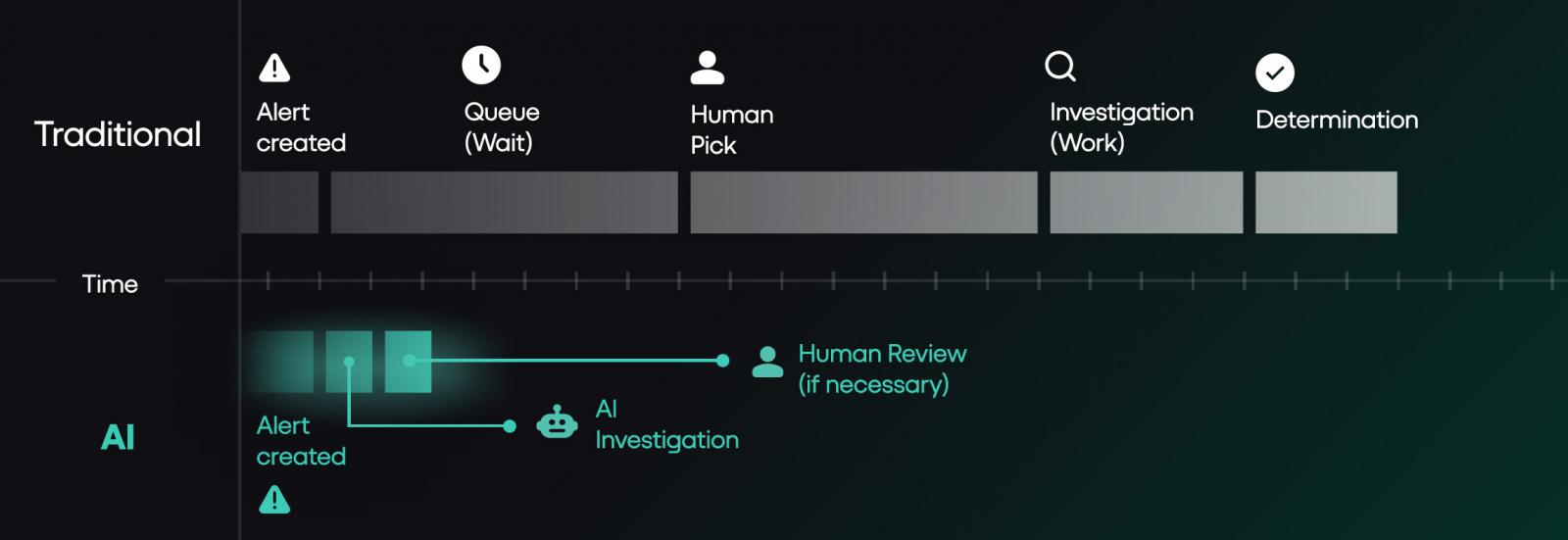

The architecture under your SOC is the reason. Not your team. Not your tooling investment. Not your hiring funnel. The operating model your program inherited assumed human-driven alert triage at the volume the business was producing five years ago, and the business stopped producing alerts at that volume a long time ago.

This is a piece about why hiring more analysts won’t close the gap, what changes when you fix the model instead, and the specific limitations and questions that should shape any AI SOC evaluation. It includes a four-question diagnostic you can run on your own program in the time it takes to finish a coffee.

The math the industry doesn’t want to admit

Google Mandiant’s recent M-Trends reporting puts global median dwell time at 14 days. The same report found that in 2025 the “hand-off” window between initial access and subsequent transfer to a secondary threat group collapsed to just 22 seconds, a 95% drop from the 8 hours recorded in 2022. CrowdStrike’s 2026 Global Threat Report uncovered a similar trend, with the average breakout time, measured from initial access to exfiltration, falling to 29 minutes.

IBM’s most recent Cost of a Data Breach research puts the average time to identify and contain a breach in 2025 at 241 days, with an average cost of $4.88 million. That’s a drop of roughly 14% from 2020, when the time to identify and contain a breach stood at 281 days. Those numbers have not improved at the pace security spending would suggest, even though that spending has roughly doubled in five years, nor have they kept up with the shrinking “breakout” and “hand-off” windows.

This isn’t framed to scare defenders into chasing the next hype. It’s the operating reality. Money in, complexity in, but the curve from detection to investigation and containment barely moves.

SOC teams have already done the obvious efficiency moves. They tier severity. They auto-close known-benign alert classes. They suppress noisy detection rules. They tune. They route. That’s not the problem.

The problem is that even after all of that work, the volume that lands on humans for actual investigation still exceeds what humans can investigate at the depth required. We’ve written an entire ebook on how the SOC queue is the breach, which you can download here.

In the deployments I’ve worked across, the post-tiering volume that hits human triage typically lands in the 120 to 150 alerts per day range. At 20 minutes per investigation including documentation, that’s 40 to 50 analyst-hours daily. SOC teams of 5 to 10 analysts can cover the top of that range during business hours, leaving the rest of the queue for the next shift, the next day, or never.
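The arithmetic behind those figures can be sketched in a few lines. This is a minimal sketch, not a staffing model: the 20-minute figure and the 120 to 150 alert range come from the paragraph above, while the 6 productive triage hours per analyst per day is an illustrative assumption that you should replace with your own number.

```python
import math

MINUTES_PER_INVESTIGATION = 20  # from the text, includes documentation


def daily_analyst_hours(alerts_per_day: int,
                        minutes_each: int = MINUTES_PER_INVESTIGATION) -> float:
    """Analyst-hours needed to investigate every alert in one day."""
    return alerts_per_day * minutes_each / 60


def analysts_needed(alerts_per_day: int,
                    productive_hours_per_analyst: float = 6.0) -> int:
    """Analysts required if each one spends `productive_hours_per_analyst`
    hours per day on triage and nothing else (assumed value, not from the text)."""
    return math.ceil(daily_analyst_hours(alerts_per_day) / productive_hours_per_analyst)


for volume in (120, 150):
    print(volume, daily_analyst_hours(volume), analysts_needed(volume))
```

Under that assumption the range works out to 40 to 50 analyst-hours and 7 to 9 analysts doing nothing but triage, which is why a team of 5 to 10 with other duties ends up carrying part of the queue over.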

That’s the gap that doesn’t close with more headcount. You can’t hire enough analysts to investigate 100% of post-tiering volume at the depth the work requires. You can hire your way to better coverage at the margins. You cannot hire your way to the model change.

Most breaches don’t trigger a high severity alert. Instead the first signs appear in a low severity alert that gets buried in a queue no human can clear.


A diagnostic you can run on your own SOC

Before going further, run these four questions on your program. Honestly. The answers map your SOC capacity blind spots more reliably than any vendor pitch will.

1. What percentage of alerts above your defined investigation threshold did your team actually investigate last quarter? If less than 90%, you have a coverage gap that’s hiding real risk. The gap exists because of how the work flows, not because anyone is dropping the ball. More headcount won’t close it.

2. How many detection rules has your team suppressed in the last 12 months without an engineering ticket to replace the coverage? Suppressing noisy rules is healthy tuning. Suppressing them without follow-up engineering to replace what they were watching is debt. Each undocumented suppression is an attack surface you’ve stopped watching, and the threats those rules were designed to catch don’t go away because you disabled them.

3. What was your senior analyst turnover last year, and how long did each replacement take to reach productive contribution? If turnover exceeds 15% or ramp exceeds 6 months, your bench is fragile. You’re one resignation away from operational impact. Tribal knowledge walking out the door is a single point of failure most programs don’t have a remediation plan for.

4. If alert volume doubled tomorrow, what’s the first thing your team would stop doing? The honest answer is the part of your program that’s already underwater. Whatever you’d cut first is what’s currently holding on by a thread. That’s where to focus the operating model conversation.

If three or more of these answers concern you, the productive conversation moves past hiring and into a different question: whether the architecture under your team can carry the program you actually want to run.
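For teams that want to track the diagnostic quarter over quarter, the four questions can be captured as a small scoring sketch. The thresholds for questions 1 and 3 come from the text above. Treating any undocumented suppression as a concern, and reducing question 4 to a yes/no judgment call, are simplifying assumptions. All names here are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DiagnosticAnswers:
    investigated_pct: float           # Q1: % of above-threshold alerts investigated last quarter
    suppressions_without_ticket: int  # Q2: rules suppressed with no replacement ticket
    senior_turnover_pct: float        # Q3: senior analyst turnover last year
    ramp_months: float                # Q3: months for a replacement to become productive
    first_cut_is_core_work: bool      # Q4: judgment call (assumption: reduced to yes/no)


def concerns(a: DiagnosticAnswers) -> list[str]:
    """Return the list of diagnostic answers that should concern you."""
    flags = []
    if a.investigated_pct < 90:
        flags.append("coverage gap")
    if a.suppressions_without_ticket > 0:  # assumption: any undocumented suppression counts
        flags.append("suppression debt")
    if a.senior_turnover_pct > 15 or a.ramp_months > 6:
        flags.append("fragile bench")
    if a.first_cut_is_core_work:
        flags.append("program already underwater")
    return flags


def needs_operating_model_review(a: DiagnosticAnswers) -> bool:
    """Three or more concerns moves the conversation past hiring."""
    return len(concerns(a)) >= 3


example = DiagnosticAnswers(72.0, 4, 10.0, 8.0, False)
print(concerns(example), needs_operating_model_review(example))
```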

What changes when you fix the model

The teams making real progress aren’t the ones hiring more analysts. They’re the ones changing what work humans are required to do at all.

JB Poindexter & Co, an 8,500-employee diversified manufacturer, deployed Prophet AI in 2025. In the first 60 days, they ran 4,407 investigations through the platform with a mean time to investigate under 4 minutes.

That’s 73 investigations per day at depth, against a Mandiant industry median dwell time measured in days. The deployment returned roughly 1,469 hours of analyst time to their team, equivalent to 6.3 analyst-years of investigation capacity at full annualization.
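Those figures can be reproduced with simple arithmetic. The sketch below assumes the hours returned are based on the 20 minutes per manual investigation quoted earlier in this piece. That is an inference, not something the case study states. The analyst-year conversion depends on how many productive hours you assume per analyst-year, which is also not stated, so it is left as a parameter.

```python
INVESTIGATIONS = 4407
DAYS = 60
ASSUMED_MANUAL_MINUTES = 20  # assumption: the per-investigation figure used earlier in the article


def investigations_per_day(total: int = INVESTIGATIONS, days: int = DAYS) -> float:
    return total / days


def hours_returned(total: int = INVESTIGATIONS,
                   manual_minutes: int = ASSUMED_MANUAL_MINUTES) -> float:
    """Analyst-hours a team would have spent doing the same investigations by hand."""
    return total * manual_minutes / 60


def annualized_analyst_years(productive_hours_per_year: float,
                             days: int = DAYS) -> float:
    """Scale the observed window to 365 days, then divide by the assumed
    productive hours in one analyst-year (your number, not the article's)."""
    return hours_returned() * 365 / days / productive_hours_per_year


print(round(investigations_per_day()))  # 73
print(hours_returned())                 # 1469.0
```

Call `annualized_analyst_years` with your own productive-hours figure to see how sensitive the capacity claim is to that one input.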

Their CISO, John Barrow, framed the outcome as “faster, more focused, and able to scale without adding immediate headcount.”

The operating model shift in that sentence is what matters. Not “we hired more people.” Not “we worked our existing people harder.” The work no longer required the same number of people.

Cabinetworks ran 3,200 alerts through Prophet AI in 33 days. Six escalated to a human. The unexpected outcome was a 90% reduction in SIEM costs, primarily from no longer needing to ingest and store raw EDR and identity telemetry that had been pulled into the SIEM purely for analyst pivot queries.

When the AI handles those pivots directly against source systems, that ingest tier becomes optional. The line item that gets cut isn’t the obvious one, and most teams don’t model that secondary saving when they evaluate AI SOC tools. They should. For programs running enterprise SIEM contracts in the seven-figure range, the secondary savings often exceed the cost of the AI platform itself.

A second outcome worth noting: when the queue clears, teams stop having to ignore low and medium severity alerts. Most SOCs quietly stop investigating those classes under capacity pressure, even when their security leadership knows the coverage gap matters.

A medium-severity alert isn’t risky because it’s medium. It’s risky because that’s where real attackers hide while your team is buried in critical-severity noise. Bringing the medium and low tiers back into investigation scope is the coverage shift most teams want and very few can resource.

Every deployment requires two to four weeks of focused tuning before reaching steady state.

How CISOs are funding this

The piece a CISO is mentally writing while reading vendor content is the budget request. Where does this money come from?

Three patterns I’ve seen work, in order of CISO political difficulty.

Path one: Unfilled headcount budget. The cleanest funding path. The team has approved or pending headcount the program hasn’t filled, and the AI platform replaces the need to hire that role. Fully loaded cost for a Tier 2 analyst typically runs $180K to $300K depending on market and seniority, which sets the floor for what the AI platform needs to displace to make the math work.

The JB Poindexter pattern fits here. The “scaling without adding immediate headcount” framing is procurement language for “this is what we’re doing instead of approving the next hire.”

Path two: SIEM cost reduction. If your team is using the SIEM as an investigation pivot workspace (raw EDR telemetry, identity logs, network data), and the AI platform takes over those pivots, the SIEM ingest and storage tier becomes optional.

The Cabinetworks pattern fits here. SIEM ingest savings depend heavily on volume but commonly run 30 to 60 percent of total SIEM spend when investigation telemetry is the main driver.

For programs running mid-six-figure or seven-figure SIEM contracts, this funding path can fully cover the AI platform with savings left over. Get your SIEM renewal cycle date before you start the evaluation, because the timing matters.

Path three: Tool displacement. The hardest political fight. Replacing an existing SOAR, an existing case management workflow, or an existing managed service. The savings vary too widely to generalize, but the displacement creates internal opposition from whoever owns the displaced tool. Plan for it as a 6-month change management project, not a procurement decision.

Most programs end up funding through a combination of paths one and two. Path three is a year-two conversation, not a year-one one.
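A rough way to size paths one and two before the budget conversation is sketched below. The analyst cost range and the SIEM savings range come from the figures above. The open-role count, the SIEM contract value, and the platform quote are hypothetical inputs you would supply. The article gives no platform price, and the function names are illustrative.

```python
ANALYST_COST_RANGE = (180_000, 300_000)  # fully loaded Tier 2 analyst, from the text
SIEM_SAVINGS_PCT_RANGE = (30, 60)        # percent of total SIEM spend, from the text


def funding_range(open_roles: int, siem_annual_spend: int) -> tuple[float, float]:
    """Conservative and optimistic annual funding from paths one and two combined."""
    low = open_roles * ANALYST_COST_RANGE[0] + siem_annual_spend * SIEM_SAVINGS_PCT_RANGE[0] / 100
    high = open_roles * ANALYST_COST_RANGE[1] + siem_annual_spend * SIEM_SAVINGS_PCT_RANGE[1] / 100
    return low, high


def coverage(platform_quote: float, low: float, high: float) -> str:
    """Classify a (hypothetical) platform quote against the funding range."""
    if platform_quote <= low:
        return "covered in the conservative case"
    if platform_quote <= high:
        return "covered only in the optimistic case"
    return "not covered by paths one and two"


# Hypothetical program: one unfilled role and a $1M SIEM contract.
low, high = funding_range(open_roles=1, siem_annual_spend=1_000_000)
print(low, high)
```

If a quote lands in the "optimistic case" band, the SIEM renewal date and the actual share of investigation telemetry in your ingest become the deciding inputs.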

Where humans still need to lead

I’m pro AI SOC. I work for one. So when I tell you where it isn’t the right tool, take it seriously. Three categories where I’d recommend keeping humans in the lead.

Insider threat investigations where the signal lives in human context, not logs. AI does fine on the DLP-shaped insider threat work where the signal is in telemetry: unusual file movement, exfil to personal cloud, after-hours pulls of sensitive repos. Where it struggles is the harder subset where the deciding signal isn’t in any log.

The PIP that started Monday. The conversation a manager had two weeks ago. The contractor whose contract ends Friday. AI doesn’t have that context. Your humans do.

The right design splits the work cleanly: AI handles the telemetry layer, your team handles the human-context layer. Asking one tool to do both is where these investigations break down.

Novel TTPs with no analog in training data. AI investigation is fundamentally pattern-matching over historical examples. By definition, that’s weakest on attacks that don’t look like anything you’ve seen. Your senior threat hunters earn their keep on the alerts that don’t match anything in the catalog. Don’t outsource that work.

Highly regulated environments where data residency rules dictate where alert telemetry can live. If your compliance posture won’t let metadata leave a specific cloud or country, most AI SOC platforms (Prophet AI included) require real architecture work to fit. Some can’t fit at all. Don’t let any vendor wave that concern away with a slide.

If you’re evaluating an AI SOC tool, ask the vendor exactly where their tool fails. If they don’t have an answer ready, that’s the answer.

Three questions buyers always ask

Three questions come up in almost every evaluation, and they deserve direct answers.

What happens when the AI gets it wrong? Prophet AI documents every step of every investigation. Every question asked, every query run, every piece of evidence pulled, the reasoning that led to the verdict. When a verdict is wrong, the chain of reasoning shows exactly where it went wrong, and your team can encode the correction back into Guidance so the same mistake doesn’t repeat.

That’s a different audit trail than the three-sentence case notes most analysts write under queue pressure today, and it matters more than vendor content typically acknowledges.

Regulators are starting to ask about AI-driven security decisions. Boards are asking about defensible documentation of what the SOC investigated and why. Post-incident reviews are easier to run when the evidence chain is complete by default. The audit trail isn’t a feature. It’s how you keep your seat at the table when the auditor or the board comes asking.

What happens to detection engineering? This is the question senior practitioners ask first, and it’s the right question. You might worry that if AI handles investigation, your team loses the natural feedback loop where analysts catch and tune noisy detections. The honest answer: that work moves explicitly upstream.

Instead of relying on manual triage to spot noise, detection engineering now uses the AI’s comprehensive investigation data as a massive feedback loop, shifting the focus from suppressing alerts to equipping the AI with better context.

To make that upstream work happen, detection engineering shifts from an emergent activity squeezed between alerts to a scheduled discipline owned by the senior analysts whose triage time the AI has freed up. Teams that fail to operationalize that shift see detection quality drift over time. Teams that operationalize it well see detection quality improve, because the engineering happens with intention and dedicated focus.

What does the buying committee look like? AI SOC platforms touch security operations, but the procurement conversation often pulls in IT (for integrations and identity), compliance (for data handling and audit posture), legal (for the data processing agreement and AI-specific contractual terms), and procurement (for vendor risk review).

Plan for that early. Programs that try to push AI SOC through as a security-team decision often hit a six-week delay when compliance discovers the data flow questions in week four. Programs that bring compliance and legal in at the start of the evaluation typically close in half the time.

The vendor-risk question worth asking

One question vendor content almost never addresses directly, and CISOs care about it more than vendors realize: what happens to your program if the AI SOC vendor gets acquired, pivots, or fails? Three-year procurement cycles outlast a lot of vendor strategies.

Three things worth confirming with any AI SOC vendor before signing.

First, data portability: can you export your investigation history, Guidance configurations, and detection logic in a format that survives a vendor change?

Second, runbook independence: are the human-readable Guidance rules you encoded specific to this vendor, or do they document SOC logic your team could rebuild elsewhere?

Third, contractual continuity: what happens to service obligations, data handling, and support during an acquisition or wind-down event?

The third tends to separate the serious vendors from the rest. Most can answer the first two. Few have a clean answer to the third without significant pre-work, which is itself a signal worth noting during evaluation.

Closing thought

Prophet Security’s agentic AI SOC platform operationalizes expert analyst techniques at machine speed across all alert volumes, regardless of severity, to ensure a consistently clear triage queue and preemptively neutralize threats.

If your honest answers to the four diagnostic questions earlier in this piece concerned you, the next conversation isn’t whether AI SOC is the answer. It’s what your senior analysts would actually do with their Tuesday mornings if the triage queue weren’t running them.

That’s the operating model question. Whether you solve it with Prophet Security or someone else, the architecture is what needs to change. Hiring more analysts to triage at machine-generated volume is a strategy that worked in 2018. The math hasn’t worked since 2022.

The teams that change the architecture will get a different conversation with their board next year. The teams that don’t will get the same one they had last year, with a slightly higher number on the spend line and the same number on the time-to-detect line.

Pick the conversation you want to be having.

If your SOC is dealing with alert overload or long investigation times, we’d be happy to show you what Prophet AI looks like in practice. Request a demo or reach out directly to learn more.

Rich Perkins is a Principal Sales Engineer at Prophet Security. Reach him at rich.perkins@prophetsecurity.ai or connect on LinkedIn.

Sponsored and written by Prophet Security.