Why Your Automated Pentesting Tool Just Hit a Wall

By Sila Ozeren Hacioglu, Security Research Engineer at Picus Security.

It’s a story the security community knows well. You bring in a shiny new automated penetration testing tool, and the first “run” is a revelation. The dashboard lights up with critical findings, lateral movement paths you didn’t know existed, and a “Gotcha!” moment involving a legacy service account.

The Red Team feels like they’ve found a force multiplier; the CISO feels like they’ve finally automated the “human element” of security.

But then, the honeymoon ends.

On average, by the fourth or fifth execution, the “new” findings dry up. The tool starts reporting the same stale issues, and the once-shiny dashboard becomes just another screen delivering noise. This isn’t just a lull in activity; it’s the Validation Gap – the widening distance between what organizations actually validate and what they report as validated.

If you’ve started to feel like your automated pentesting tool is overpromising and underdelivering, you’re experiencing a shift in the market. The industry is waking up to the fact that while automated pentesting is a powerful feature, it’s an increasingly dangerous strategy when used in isolation.

The PoC Cliff: Where Discovery Goes to Die

This pattern, an exciting first run followed by sharply diminishing returns by run four, isn’t anecdotal.

Security practitioners call it the Proof-of-Concept (PoC) Cliff: the steep drop in the volume of new findings once the tool has exhausted its fixed scope. It’s not a tuning problem.

By design, automated pentesting solutions deliver their best results in the first run. Within a few cycles, exploitable paths within their scope are exhausted. But that doesn’t mean your environment is secure. It just means the tool has reached its limits, while deeper issues remain untested.

This is the structural ceiling of a tool operating against a deterministic surface. It’s an architectural limitation, not an operational one.

Automated pentesting chains its steps. Step B depends on Step A, and Step C depends on Step B. Once you patch the specific path the tool favors, it’s blocked at Step A, and Steps B through Z never execute. The tool might be able to test 20 lateral movement techniques, but if it gets caught early in the chain, those techniques stay dark. You get the false sense of “mission accomplished” while the rest of your attack surface remains unprobed.

This is where Breach and Attack Simulation (BAS) draws a hard line.

BAS doesn’t chain; it runs thousands of independent, atomic simulations. Each technique gets its own clean execution. A blocked exfiltration test over DNS doesn’t prevent testing exfiltration over HTTPS next. A failed lateral movement technique doesn’t stop the tool from testing 19 others.
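The contrast between chained and atomic execution can be sketched as a toy model in Python. This is an illustration only, not how any vendor's engine works: the technique names, the `blocked` set, and both function names are hypothetical.

```python
from typing import Dict, List, Set

# Hypothetical technique names, used only for illustration.
TECHNIQUES: List[str] = [
    "initial_access",
    "credential_dump",
    "lateral_movement_smb",
    "exfil_dns",
    "exfil_https",
]


def run_chained(techniques: List[str], blocked: Set[str]) -> Dict[str, str]:
    """Chained model: each step depends on the previous one.

    Execution stops at the first blocked step, so later steps never run.
    """
    results: Dict[str, str] = {}
    for name in techniques:
        if name in blocked:
            results[name] = "blocked"
            break
        results[name] = "succeeded"
    return results


def run_atomic(techniques: List[str], blocked: Set[str]) -> Dict[str, str]:
    """Atomic model: every technique is tested on its own, whatever the others did."""
    return {
        name: ("blocked" if name in blocked else "succeeded")
        for name in techniques
    }


# The favored entry path has been patched, so the first step is blocked.
blocked_steps = {"initial_access"}
chained = run_chained(TECHNIQUES, blocked_steps)
atomic = run_atomic(TECHNIQUES, blocked_steps)

print(f"chained tested {len(chained)} of {len(TECHNIQUES)} techniques")
print(f"atomic tested {len(atomic)} of {len(TECHNIQUES)} techniques")
```

In this model the chained run reports on a single technique and leaves the other four untested, while the atomic run produces a result for all five.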

One tests the path. The other tests the shield.

Clearing the Air: BAS vs. Automated Pentesting

To better understand the “why” of the PoC Cliff, we need to address a growing point of confusion in the industry. While Breach and Attack Simulation (BAS) and automated penetration testing share the broad goal of validation, they use different methods to answer different questions.

Think of BAS as a series of independent measurements. It continuously and safely emulates adversarial techniques, such as malware payloads, lateral movement, and exfiltration, to verify whether your specific security controls (firewalls, WAF, EDR, SIEM) are actually doing their jobs.

Its primary mission is to test if your defenses are blocking or alerting on known threat behaviors. Each test stands alone as a check of your defensive strength.

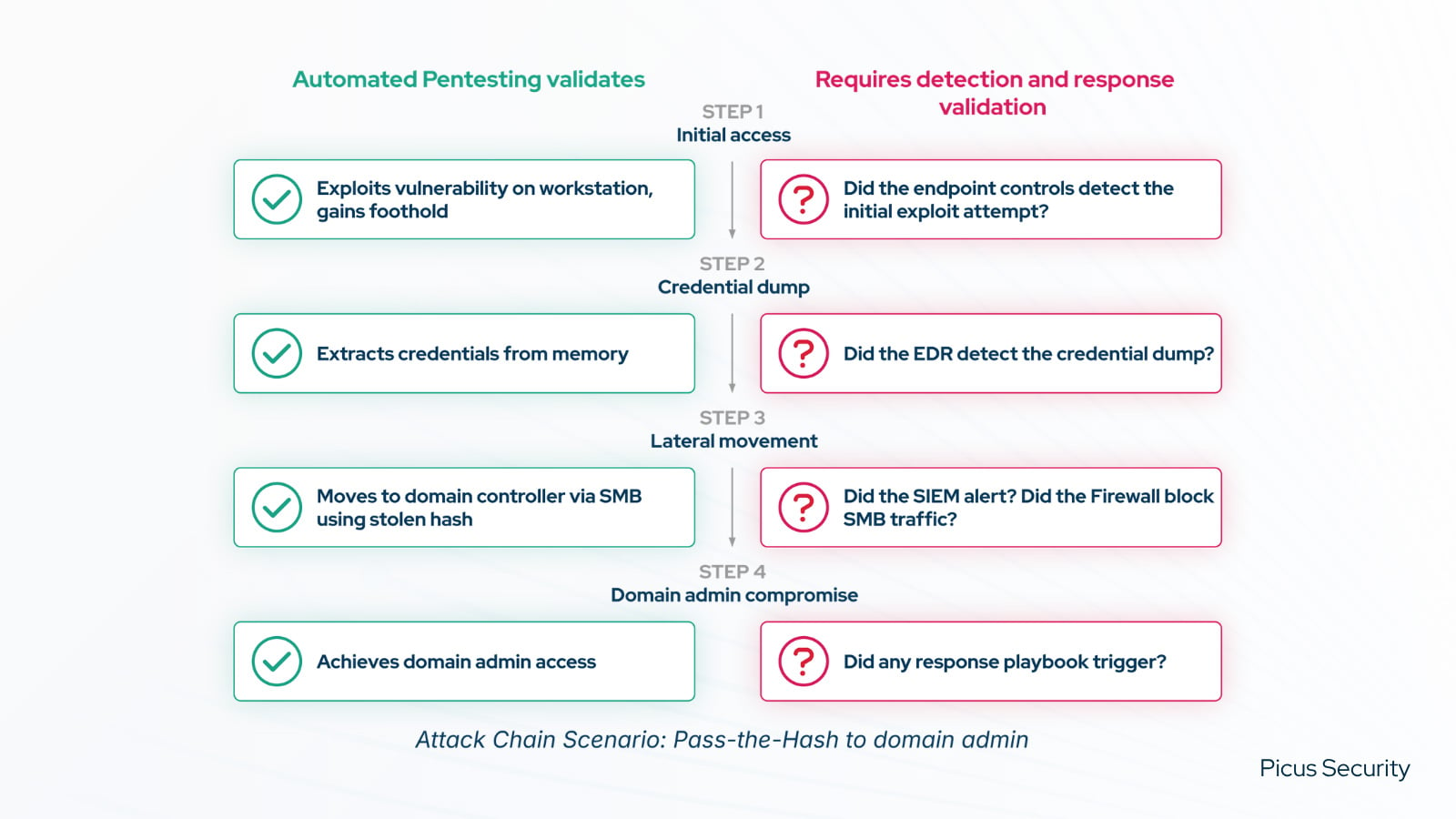

Automated Penetration Testing, by contrast, is directional. It takes a more surgical, adversarial approach by chaining vulnerabilities and misconfigurations together the way a real attacker would. It excels at exposing complex attack paths, such as Kerberoasting in Active Directory or escalating privileges to reach a Domain Admin account.

Though both are often thought of as “validation methods,” the two are fundamentally different in mission and outcomes. One tells you how strong your individual defenses are; the other tells you how far an attacker can travel in spite of them.

The “Simplicity” Trap: Why Pentesting Isn’t BAS

Recently, some vendors have proposed the idea that automated pentesting can, and should, replace BAS. On paper, it sounds great.

In reality, this isn’t an upgrade; it’s a coverage regression disguised as a simplification.

As we’ve just seen, automated pentesting and BAS tools answer fundamentally different questions. To secure a modern enterprise, you need the answers to both:

- BAS asks: “Are my firewalls, EDRs, WAFs, and SIEMs actually doing their jobs across the entire MITRE ATT&CK framework?” It focuses on the effectiveness of your defensive controls.
- Automated Pentesting asks: “Can an attacker get from Point A to Point B using known exploits?” It focuses on the success of specific attack paths.

If you swap BAS assessments for automated pentesting, you stop validating your prevention and detection stack.

You might know that an attacker can’t reach your database via one specific exploit, but you have zero visibility into whether your EDR would even blink if they tried a different, non-exploitative technique.

The Six Blind Spots of the Modern Attack Surface

While marketing materials promise “comprehensive” coverage, the reality is that automated pentesting typically only scratches the surface of infrastructure and application paths.

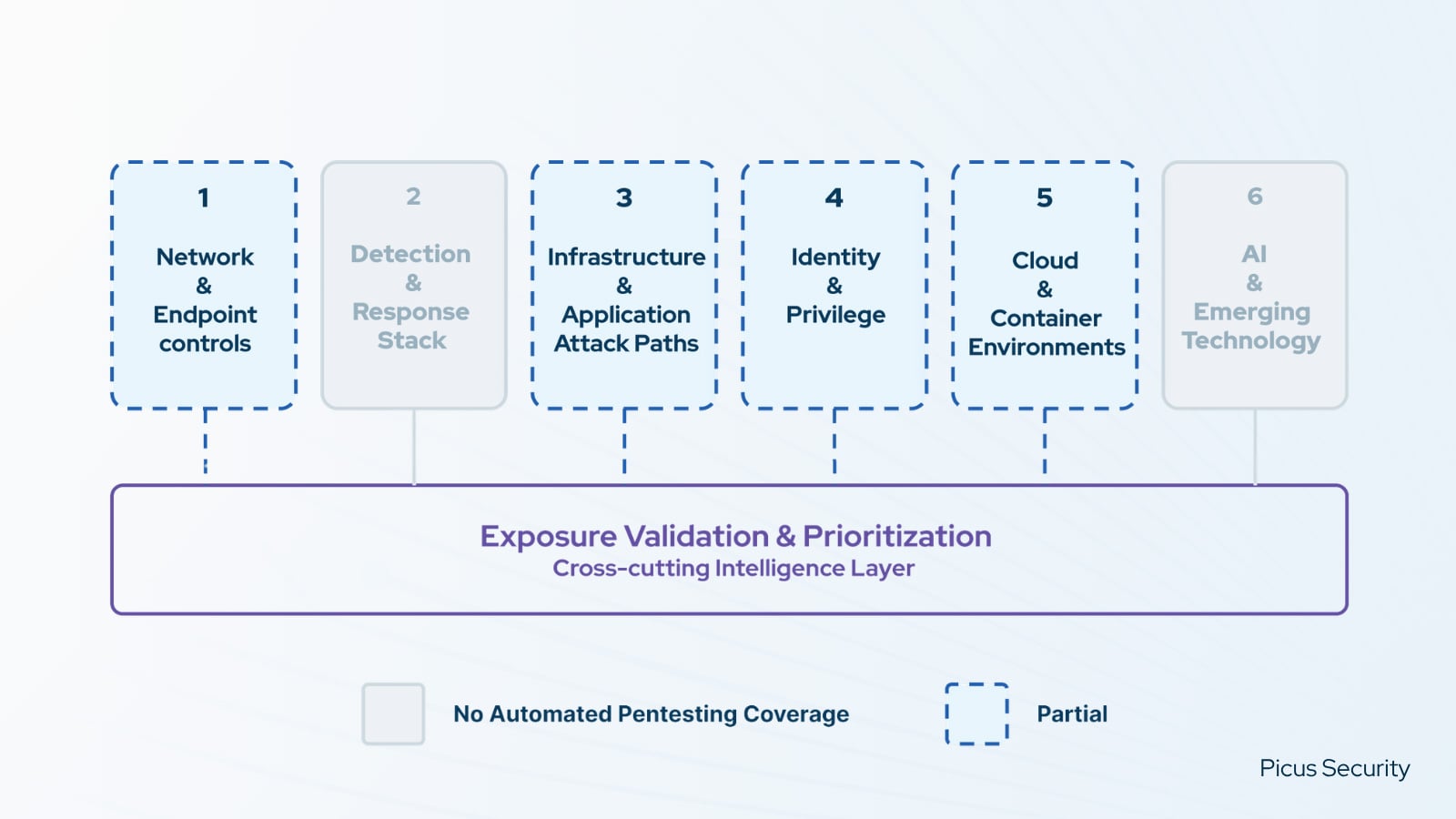

As shown above, two surfaces get no coverage from automated pentesting. Four get partial coverage at best. Not a single surface is fully covered: 0 of 6. This creates a massive validation gap, right where today’s breaches are actually happening:

- Network & Endpoint Controls: Exploit paths are identified, but there is no confirmation that firewalls, WAF, IPS, DLP, or EDR are actually blocking the threats they’re configured to stop. Controls fail silently, and “configured” is mistakenly equated with “effective.”
- Detection & Response Stack: Automated pentesting has no visibility into whether SIEM rules and EDR detection logic actually fire. The tool runs as the attacker; it cannot observe the defender. Detection coverage is assumed, not measured.
- Infrastructure & Application Attack Paths: These tests often hit the PoC Cliff. Infrastructure paths are mapped, but coverage of complex application-layer attack chains varies, and those chains often stay open and available to adversaries.
- Identity & Privilege: Existing paths are traversed, but there is no systematic validation of Active Directory configurations, IAM policies, and privilege boundaries.
- Cloud & Container Environments: Dynamic Kubernetes policies and cloud security controls frequently remain dark and are not revalidated as configurations drift.
- AI & Emerging Technology: Critical guardrails for internal LLMs against jailbreaks, prompt injection, and adversarial manipulation remain completely unvalidated.
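One way to keep track of the six surfaces listed above is a simple scorecard. The sketch below is a minimal example, not part of any Picus product: the `Coverage` levels, the `summarize` function, and the sample scores are all assumptions to be replaced with the results of your own audit.

```python
from enum import Enum
from typing import Dict, List


class Coverage(Enum):
    NONE = 0
    PARTIAL = 1
    FULL = 2


# Surface names as listed in this article.
SURFACES: List[str] = [
    "Network & Endpoint Controls",
    "Detection & Response Stack",
    "Infrastructure & Application Attack Paths",
    "Identity & Privilege",
    "Cloud & Container Environments",
    "AI & Emerging Technology",
]


def summarize(scores: Dict[str, Coverage]) -> Dict[str, List[str]]:
    """Group surfaces by coverage level.

    Raises ValueError if any surface has not been scored, so that a
    surface nobody looked at cannot pass silently as "covered".
    """
    unscored = [s for s in SURFACES if s not in scores]
    if unscored:
        raise ValueError(f"unscored surfaces: {unscored}")
    return {
        level.name.lower(): [s for s in SURFACES if scores[s] is level]
        for level in Coverage
    }


# Placeholder scores for illustration only; substitute your own assessment.
example_scores = {surface: Coverage.PARTIAL for surface in SURFACES}
example_scores["Detection & Response Stack"] = Coverage.NONE
example_scores["AI & Emerging Technology"] = Coverage.NONE

summary = summarize(example_scores)
for level, surfaces in summary.items():
    print(f"{level}: {len(surfaces)} of {len(SURFACES)}")
```

Refusing to summarize an incomplete scorecard is a deliberate choice: it forces an explicit decision for every surface instead of an accidental omission.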

The Intelligence Layer: Exposure Validation & Prioritization

This cross-cutting layer unifies these silos. Matching theoretical CVEs against live security control performance strips out the noise: it shrinks the 60%+ of findings flagged as high or critical to the ~10% that are genuinely exploitable, cutting false urgency by more than 80%. The result is one defensible, prioritized action list.
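The “over 80%” figure follows from the two percentages in the paragraph above. As a quick check, the snippet below takes 60 and 10 as round stand-ins for “60%+” and “~10%”; that rounding, and the function name, are assumptions made for the arithmetic.

```python
def false_urgency_reduction(flagged_pct: float, exploitable_pct: float) -> float:
    """Fraction of high/critical flags that drop out after validation."""
    if flagged_pct <= 0:
        raise ValueError("flagged_pct must be positive")
    return (flagged_pct - exploitable_pct) / flagged_pct


reduction = false_urgency_reduction(flagged_pct=60.0, exploitable_pct=10.0)
print(f"{reduction:.1%}")  # prints "83.3%"
```

Put differently, of every 60 findings initially flagged as urgent, roughly 50 can be deprioritized under these assumed figures.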

The Three Questions You Need to Ask

Understanding this gap is one thing; fixing it requires holding your validation vendors to a higher standard. To cut through the marketing hype and find out what a tool actually delivers, everything distills down to three fundamental diagnostic questions.

Bring them with you to every vendor meeting, every renewal conversation, and every budget review. They work because they are structural, not subjective. Any tool that answers all three with specificity and evidence deserves serious evaluation; any tool that cannot has just shown you where your gap is.

- Which of my six validation surfaces does your tool cover, and at what scope within each?
- How does your platform distinguish exploitable vulnerabilities from theoretical ones, specifically using my live security control performance data?
- How does your platform normalize findings from my other tools into a single, deduplicated, prioritized view and action list?
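To make the second and third questions concrete, here is a minimal sketch of what normalization, deduplication, and control-aware prioritization could look like. It does not describe any vendor's implementation: the `Finding` fields, the per-tool field maps, the placeholder issue IDs, and the rule of ranking control-blocked findings last are all assumptions.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Set, Tuple


@dataclass(frozen=True)
class Finding:
    asset: str
    issue_id: str    # e.g. a CVE or technique identifier
    severity: float  # 0-10 scale, as reported by the source tool
    source: str


def normalize(raw: dict, source: str, field_map: Dict[str, str]) -> Finding:
    """Map one tool's field names onto the shared Finding schema."""
    return Finding(
        asset=str(raw[field_map["asset"]]).strip().lower(),
        issue_id=str(raw[field_map["issue_id"]]).strip().upper(),
        severity=float(raw[field_map["severity"]]),
        source=source,
    )


def prioritize(
    findings: Iterable[Finding], blocked: Set[Tuple[str, str]]
) -> List[Finding]:
    """Deduplicate by (asset, issue_id), then rank.

    Findings that a control was observed to block sort last; within each
    group, higher severity comes first.
    """
    best: Dict[Tuple[str, str], Finding] = {}
    for f in findings:
        key = (f.asset, f.issue_id)
        if key not in best or f.severity > best[key].severity:
            best[key] = f
    return sorted(
        best.values(),
        key=lambda f: ((f.asset, f.issue_id) in blocked, -f.severity),
    )


# Hypothetical raw records from two tools with different field names.
scanner_map = {"asset": "host", "issue_id": "cve", "severity": "cvss"}
pentest_map = {"asset": "target", "issue_id": "id", "severity": "score"}
findings = [
    normalize({"host": "DB01 ", "cve": "cve-0000-0001", "cvss": 9.8}, "scanner", scanner_map),
    normalize({"target": "db01", "id": "CVE-0000-0001", "score": 7.5}, "pentest", pentest_map),
    normalize({"host": "web01", "cve": "CVE-0000-0002", "cvss": 6.1}, "scanner", scanner_map),
]

# Assumed validation result: a control blocked this issue on db01.
blocked_pairs = {("db01", "CVE-0000-0001")}

queue = prioritize(findings, blocked_pairs)
for f in queue:
    print(f.asset, f.issue_id, f.severity, f.source)
```

Here the two reports about `db01` collapse into one entry, and the lower-severity `web01` finding rises to the top because nothing was observed to block it. A vendor's real answer should be at least this specific about which fields are matched and which evidence moves a finding up or down.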

The difference between “we chose not to validate this surface” and “we didn’t realize it wasn’t being validated” is the difference between risk management and exposure.

The Bottom Line

Your attack surface doesn’t care which vendor’s logo is on the tool.

It only cares whether it has been tested. If your current automated pentesting deployment is leaving critical surfaces in the dark, it’s time to remap your strategy.

Our latest practitioner’s guide, The Validation Gap: What Automated Pentesting Alone Cannot See, provides the complete diagnostic framework you’ll need to audit your own coverage, diagnose where it plateaus, and build a unified validation architecture.

Start with the six surfaces. Score your own coverage. Knowing where your tools stop is how you decide where to go next.

Sponsored and written by Picus Security.